Overview

In defining Biology’s relation to the other Natural Sciences, Warren Weaver, a pioneer of what we would today call Systems Biology, aptly characterized pre-1900 classical physics as largely concerned with two-variable problems of organized simplicity, post-1900 statistical physics as concerned with two-billion-variable problems of disorganized complexity, and Biology as wrestling with problems in the great, untouched middle region, spanning from two to an astronomical number of variables. The scope of Biology is therefore a set of problems that deal simultaneously with a sizable number of factors interrelated into an organic whole, i.e., problems of organized complexity.

These new problems, and the future of the world depends on many of them, requires science to make a third great advance, an advance that must be even greater than the nineteenth century conquest of problems of organized simplicity or the twentieth century victory over problems of disorganized complexity. Science must, over the next 50 years, learn to deal with these problems of organized complexity.

— Warren Weaver (1948)

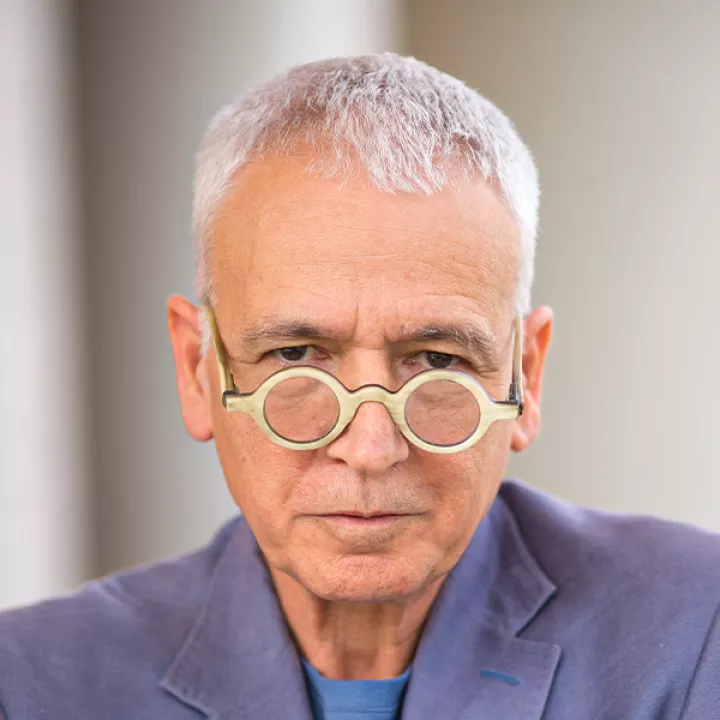

Aviv Bergman, Ph.D.

Chair, Department of Systems & Computational Biology

Mission

Albert Einstein College of Medicine is positioned to build on its current strengths in exciting new directions. Through innovative research and education, the Department of Systems and Computational Biology seeks to advance the understanding of living systems as a whole. It promotes a new approach to biology that combines theory and experiment to explain how the higher-level properties of complex biological systems emerge from the interactions among their parts.

Objective

The faculty develop research and education programs that embrace the engineering, computational, mathematical, and physical sciences as integral parts of the Biological and Biomedical sciences, laying the foundation for a discipline of Systems and Computational Biology. We seek to build an academic environment that draws on, and respects the value of, these established disciplines. An important part of this fusion is serious, sustained, multidisciplinary research into fundamental questions in Biology, ranging from how biological systems function to how life’s variation evolves.

The main challenge currently facing Biology is to understand biological phenomena at a holistic, systems level without neglecting the detailed, reductionist information about their individual components. This contrasts with the currently prevailing view of “massively parallel reductionism”: despite the availability of vast amounts of information, the focus nonetheless remains on the individual components. Recently, however, the academic community has once again recognized the need for integrated, systems-level strategies that combine computational and experimental tools with evolutionary inference to address these challenges. It is this integration that defines the department’s activity.

Contact Us

Albert Einstein College of Medicine

1300 Morris Park Avenue

Jack and Pearl Resnick Campus

Bronx, NY 10461

Administrator

Francesco Barca